Agent-Ready AI Infrastructure.

Vast is the infrastructure layer where AI agents autonomously design, procure, and optimize their own compute. API-native provisioning. Real-time pricing. Per-second billing.

Trusted by developers and AI teams worldwide

Real-time GPU infrastructure

Prices set by supply and demand across 20,000+ GPUs. Transparent. Programmatically queryable.

How it works

From sign-up to running GPU workloads in under five minutes.

Add credit & get your API key

Start with as little as $5. Grab your API key from the console — no contracts, no sales calls.

Search GPUs

Filter by model, VRAM, price, and availability — via console or API.

Deploy

Launch instances in seconds. Scale up or down programmatically.
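The search step above can be sketched in Python as a simple client-side filter. This is only an illustration under stated assumptions: the `Offer` record, its field names, and the sample prices are hypothetical and do not reflect Vast.ai's actual response schema or live pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    """Hypothetical GPU offer; field names are illustrative, not Vast.ai's schema."""
    gpu_model: str
    vram_gb: int
    price_per_hour: float  # USD, made-up values
    available: bool


def search_offers(offers, model=None, min_vram_gb=0, max_price_per_hour=float("inf")):
    """Return available offers that match the filters, cheapest first."""
    matches = [
        o for o in offers
        if o.available
        and (model is None or o.gpu_model == model)
        and o.vram_gb >= min_vram_gb
        and o.price_per_hour <= max_price_per_hour
    ]
    return sorted(matches, key=lambda o: o.price_per_hour)


# Made-up sample data, used only to exercise the filter.
SAMPLE_OFFERS = [
    Offer("RTX 4090", 24, 0.40, True),
    Offer("RTX 4090", 24, 0.35, False),  # cheaper, but not currently available
    Offer("A100", 80, 1.20, True),
    Offer("RTX 3090", 24, 0.20, True),
]
```

Calling `search_offers(SAMPLE_OFFERS, min_vram_gb=24, max_price_per_hour=0.5)` keeps only the available offers at or below the price cap and orders them by price, which is the kind of comparison an agent might run before launching an instance.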

Compare. Launch. Exit. Repeat.

Every GPU on Vast.ai is provisioned through code. The same API that developers use to deploy in seconds is the interface agents use to procure and optimize at scale.
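As a rough sketch of what calling that API from code might look like, the snippet below mirrors the curl request on this page using only the Python standard library. The URL and bearer-token header are copied from that curl example; the helper names `build_bundles_request` and `fetch_bundles` are hypothetical, and the assumption that the endpoint returns JSON is not confirmed here.

```python
import json
import urllib.request

# URL taken from the curl example on this page.
BUNDLES_URL = "https://cloud.vast.ai/api/v1/bundles/"


def build_bundles_request(api_key: str) -> urllib.request.Request:
    """Build (but do not send) a GET request with a bearer-token header."""
    return urllib.request.Request(
        BUNDLES_URL,
        headers={"Authorization": f"Bearer {api_key}"},
        method="GET",
    )


def fetch_bundles(api_key: str, timeout: float = 10.0):
    """Send the request and decode the body, assuming the response is JSON."""
    request = build_bundles_request(api_key)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

A caller would typically read the key from the environment, for example `fetch_bundles(os.environ["VAST_API_KEY"])`, rather than hard-coding it.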

pip install vastai

One install gives you both the CLI and Python SDK.

The interface agents call to provision infrastructure.

curl -H "Authorization: Bearer $VAST_API_KEY" https://cloud.vast.ai/api/v1/bundles/

One platform. Three ways to deploy.

GPU Cloud for full control. Serverless for zero-ops inference. Clusters for large-scale training.

GPU Cloud

On-demand instances across 40+ data centers and 20,000+ GPUs. Deploy in seconds via CLI, SDK, or API.

Serverless

Deploy models as endpoints with automatic benchmarking and optimization across GPU types. Autoscale to zero, pay only for compute time.

Clusters

Dedicated multi-node GPU clusters with InfiniBand networking for large-scale training.

Built for Every AI Workload

From training to inference, fine-tuning to rendering — run any GPU workload on Vast.

Use Cases

Popular Models, Ready to Deploy

Launch pre-configured templates for the most popular open-source models.

Kimi K2.6

Kimi K2.6 is an open-source, natively multimodal, agentic MoE model from Moonshot AI with 1T total parameters and 32B activated, advancing long-horizon coding, coding-driven design, and swarm-based task orchestration.

Gemma 4 31B IT

Gemma 4 31B is a dense vision-language model by Google with 256K context and a thinking mode.

“Vast.ai reduced our GPU costs by over 60% while giving us the flexibility to scale training jobs on demand. We serve 200K daily users without breaking the bank.”

How teams build on Vast.ai

See how teams use Vast.ai to scale AI infrastructure and accelerate production workloads.

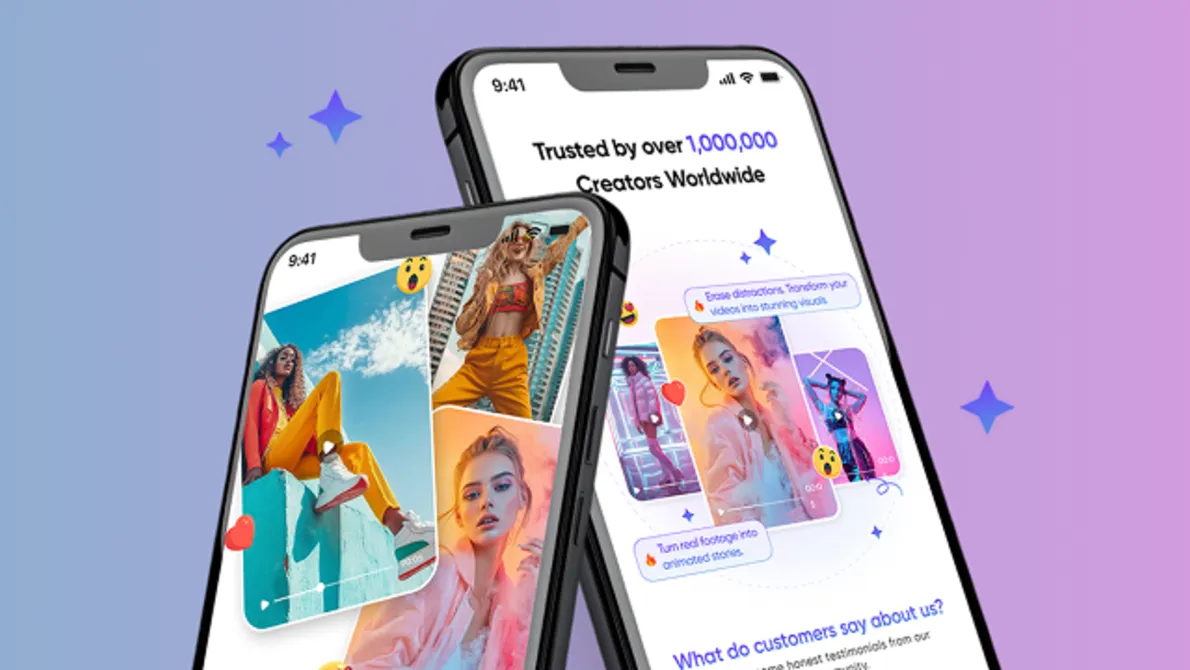

Creatix Technology

Creatix Technology Scales to 200K Daily Users with Vast.ai's GPU Cloud

How a fast-growing AI app company cut infrastructure costs by over 60% and powered millions of new users with Vast.ai.

PAICON

PAICON Accelerates Global, Data-Centric Cancer Diagnostics with Vast.ai

How a global oncology data platform used Vast.ai’s GPU cloud to rapidly iterate on Athena—validating that diversity can matter more than scale—while significantly reducing research-phase training costs.